Reddit followed Twitter’s path by blocking third-party applications and transformed its sharing space into an absolute network of control.

The changes take effect on June 30, 2023, two days after this post is published.

Third-party applications will disappear and, I estimate, so will about 30% of the content of the social network, or rather, of the institutional surveillance service.

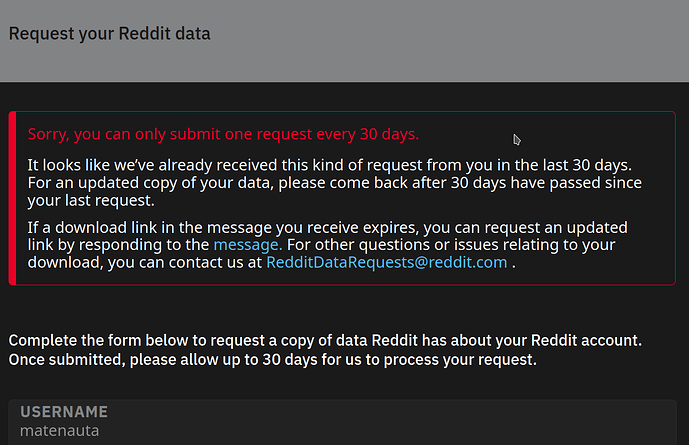

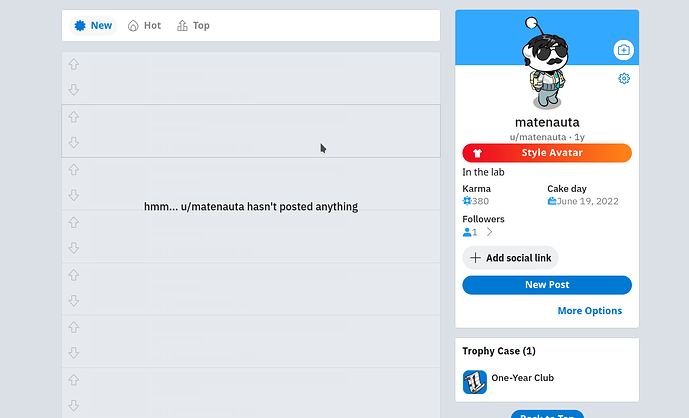

Reddit has a button that is supposed to eventually send you your data, but it doesn’t work. I used it almost a month ago and so far I haven’t received any response:

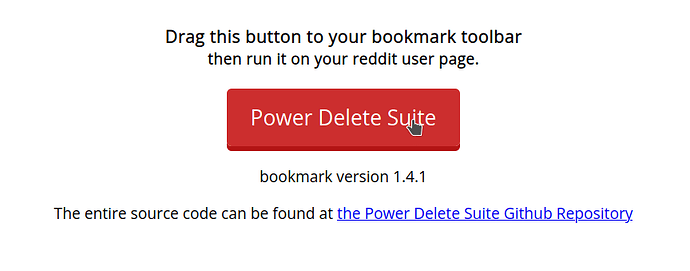

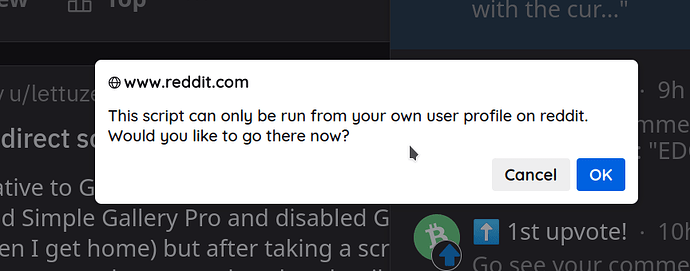

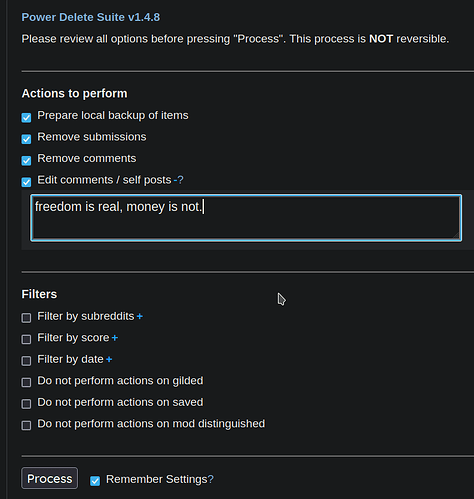

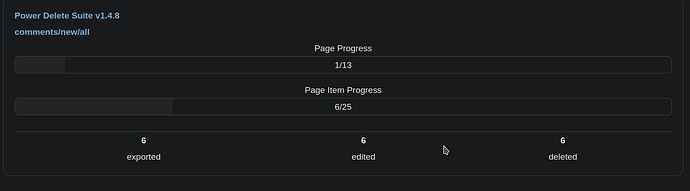

Below is the simplest and fastest alternative I found to download your comments and personal information from Reddit.

It’s for Debian (Linux) but you can use it on Windows or Mac because Python is cross-platform.

First of all, check your preferences

If you never checked them before, you have been collaborating with several servers, services and institutions, giving them permission to make a lot of money from your time:

I do NOT want to appear in search engines, have my experience "personalized" (be sold junk), or get "recommendations" (have my screen polluted with ads).

Continue once you have deactivated that nonsense in your profile.

Download data from Reddit

- Install Python if you don’t have it on your system, and then the Python packages pipx and reddit-user-to-sqlite. On Debian, pip is provided by the python3-pip package:

apt install python3

apt install python3-pip

pip install pipx

pipx install reddit-user-to-sqlite

- Create the metadata.json file with this content, in the folder where you are going to download your data:

{
  "databases": {
    "reddit": {
      "tables": {
        "comments": {
          "sort_desc": "timestamp",
          "plugins": {
            "datasette-render-markdown": {
              "columns": ["text"]
            },
            "datasette-render-timestamps": {
              "columns": ["timestamp"]
            }
          }
        },
        "posts": {
          "sort_desc": "timestamp",
          "plugins": {
            "datasette-render-markdown": {
              "columns": ["text"]
            },
            "datasette-render-timestamps": {
              "columns": ["timestamp"]
            }
          }
        },
        "subreddits": {
          "sort": "name"
        }
      }
    }
  }
}

- Run reddit-user-to-sqlite and view the downloaded information in SQLite format (.db file), preferably in datasette or SQLiteBrowser.

- Browse the dump and do whatever you want with the info; you can export it to JSON and eventually link it to other projects.
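The JSON export mentioned in the last step can also be done without any extra tool. The following is a minimal sketch, not part of reddit-user-to-sqlite, that uses only Python's standard library. The function name `export_table` and the output file name are my own choices for illustration; the table names (comments, posts, subreddits) are the ones that appear in metadata.json.

```python
import json
import sqlite3
from pathlib import Path


def export_table(db_path: str, table: str, out_path: str) -> int:
    """Dump every row of `table` to a JSON file and return the row count.

    Hypothetical helper for illustration; it is not shipped with any tool
    mentioned in this post.
    """
    conn = sqlite3.connect(db_path)
    conn.row_factory = sqlite3.Row  # rows can be converted to dicts by column name
    try:
        # Table names cannot be passed as SQL parameters, so the name is
        # checked against the tables that really exist before it is used.
        known = {
            row["name"]
            for row in conn.execute(
                "SELECT name FROM sqlite_master WHERE type = 'table'"
            )
        }
        if table not in known:
            raise ValueError(f"no table named {table!r} in {db_path}")
        rows = [dict(row) for row in conn.execute(f'SELECT * FROM "{table}"')]
    finally:
        conn.close()

    # default=str keeps the dump from failing on values JSON cannot encode.
    Path(out_path).write_text(
        json.dumps(rows, indent=2, ensure_ascii=False, default=str),
        encoding="utf-8",
    )
    return len(rows)
```

Run from the folder that holds the dump, a call such as `export_table("reddit.db", "comments", "comments.json")` should write one JSON object per comment.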

Commands without output

PC@PC:~$ mkdir reddit

PC@PC:~$ cd reddit

PC@PC:~/reddit$ pip install pipx

PC@PC:~/reddit$ pipx install reddit-user-to-sqlite

PC@PC:~/reddit$ reddit-user-to-sqlite user satoshinotdead

PC@PC:~/reddit$ pipx install datasette

PC@PC:~/reddit$ nano metadata.json

PC@PC:~/reddit$ datasette reddit.db --metadata metadata.json
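If you would rather not paste the file by hand in nano, metadata.json could also be generated by a short script. This is only a sketch of that idea: the function names are hypothetical, and the content is the same configuration given earlier in this post, with the settings shared by the comments and posts tables written once.

```python
import json
from pathlib import Path


def table_settings() -> dict:
    """Settings shared by the comments and posts tables."""
    return {
        "sort_desc": "timestamp",
        "plugins": {
            "datasette-render-markdown": {"columns": ["text"]},
            "datasette-render-timestamps": {"columns": ["timestamp"]},
        },
    }


def build_metadata() -> dict:
    """Build the same structure as the metadata.json shown in this post."""
    return {
        "databases": {
            "reddit": {
                "tables": {
                    "comments": table_settings(),
                    "posts": table_settings(),
                    "subreddits": {"sort": "name"},
                }
            }
        }
    }


def write_metadata(path: str = "metadata.json") -> None:
    """Write the metadata as indented JSON to `path`."""
    Path(path).write_text(
        json.dumps(build_metadata(), indent=2) + "\n", encoding="utf-8"
    )
```

Calling `write_metadata()` inside the reddit folder should leave a metadata.json there, ready for the datasette command above.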

Commands with output

PC@PC:~$ mkdir reddit

PC@PC:~$ cd reddit

PC@PC:~/reddit$ pipx install reddit-user-to-sqlite

bash: pipx: command not found

PC@PC:~/reddit$ pip install pipx

Defaulting to user installation because normal site-packages is not writeable

Collecting pipx

Downloading pipx-1.2.0-py3-none-any.whl (57 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 57.8/57.8 kB 744.3 kB/s eta 0:00:00

Collecting userpath>=1.6.0

Downloading userpath-1.8.0-py3-none-any.whl (9.0 kB)

Requirement already satisfied: packaging>=20.0 in /usr/lib/python3/dist-packages (from pipx) (20.9)

Collecting argcomplete>=1.9.4

Downloading argcomplete-3.1.1-py3-none-any.whl (41 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 41.5/41.5 kB 2.9 MB/s eta 0:00:00

Collecting click

Downloading click-8.1.3-py3-none-any.whl (96 kB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 96.6/96.6 kB 1.9 MB/s eta 0:00:00

Installing collected packages: click, argcomplete, userpath, pipx

Successfully installed argcomplete-3.1.1 click-8.1.3 pipx-1.2.0 userpath-1.8.0

[notice] A new release of pip is available: 23.0.1 -> 23.1.2

[notice] To update, run: python3 -m pip install --upgrade pip

PC@PC:~/reddit$ pipx install reddit-user-to-sqlite

installed package reddit-user-to-sqlite 0.4.1, installed using Python 3.9.2

These apps are now globally available

- reddit-user-to-sqlite

done! ✨ 🌟 ✨

PC@PC:~/reddit$ reddit-user-to-sqlite user satoshinotdead

loading data about /u/satoshinotdead into reddit.db

fetching (up to 10 pages of) comments

30%|█████████████████████████████████████████████▉ | 3/10 [00:04<00:09, 1.42s/it]

saved/updated 331 comments

fetching (up to 10 pages of) posts

0%| | 0/10 [00:00<?, ?it/s]

saved/updated 12 posts

PC@PC:~/reddit$ ls -l

total 296

-rw-r--r-- 1 PC PC 299008 jun 28 11:29 reddit.db

PC@PC:~/reddit$ pipx install datasette

installed package datasette 0.64.3, installed using Python 3.9.2

These apps are now globally available

- datasette

done! ✨ 🌟 ✨

PC@PC:~/reddit$ nano metadata.json

PC@PC:~/reddit$ datasette reddit.db --metadata metadata.json

INFO: Started server process [46765]

INFO: Waiting for application startup.

INFO: Application startup complete.

INFO: Uvicorn running on http://127.0.0.1:8001 (Press CTRL+C to quit)

INFO: 127.0.0.1:54230 - "GET / HTTP/1.1" 200 OK

INFO: 127.0.0.1:54230 - "GET /-/static/app.css?d59929 HTTP/1.1" 200 OK

INFO: 127.0.0.1:54230 - "GET /favicon.ico HTTP/1.1" 200 OK

INFO: 127.0.0.1:54230 - "GET /reddit/comments HTTP/1.1" 200 OK

INFO: 127.0.0.1:54230 - "GET /-/static/app.css?d59929 HTTP/1.1" 200 OK

INFO: 127.0.0.1:54238 - "GET /-/static/table.js HTTP/1.1" 200 OK

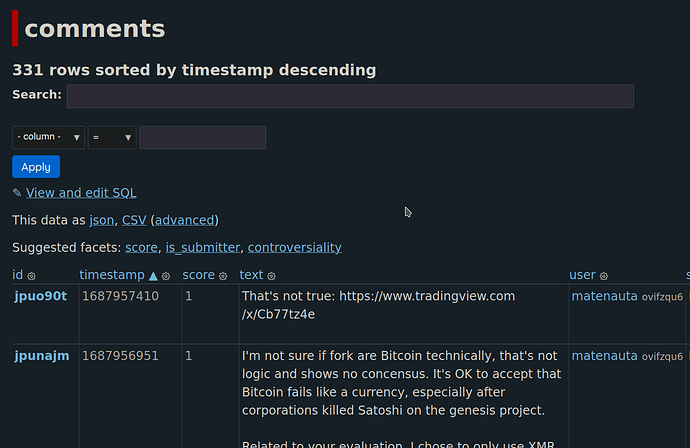

Result from datasette

The result can be viewed by running the app and opening the web address shown in the terminal, http://127.0.0.1:8001:

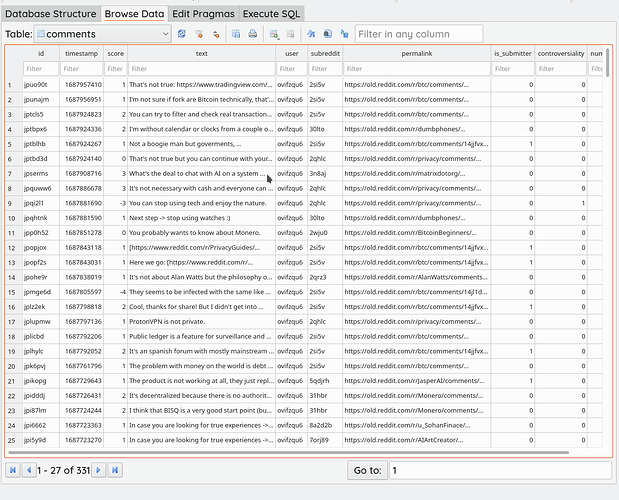
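While datasette is running, the same tables should also be readable as JSON by adding .json to the table address. The sketch below assumes the address from the log above, the database name reddit, and datasette's `_shape=array` and `_size` query options as I understand them from its documentation; the function names are made up for this example.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Address printed by datasette in the terminal log of this post.
BASE_URL = "http://127.0.0.1:8001"


def table_json_url(table: str, size: int = 10, base_url: str = BASE_URL) -> str:
    """Build the JSON address of a table in the reddit database."""
    # _shape=array asks for a plain list of row objects; _size limits the rows.
    query = urlencode({"_shape": "array", "_size": size})
    return f"{base_url}/reddit/{table}.json?{query}"


def fetch_rows(table: str, size: int = 10) -> list:
    """Fetch rows from the running datasette server (it must be started first)."""
    with urlopen(table_json_url(table, size)) as response:
        return json.load(response)
```

With the server started as shown earlier, `fetch_rows("comments", 5)` should return up to five comments as Python dictionaries.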

Result from SQLiteBrowser

Opening the reddit.db file generated by reddit-user-to-sqlite: